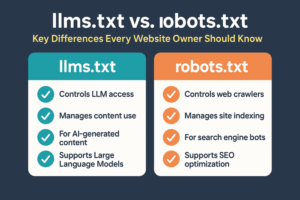

If you run a website, you've probably heard of robots.txt. Now a newer file is getting attention: llms.txt. Both live in your domain's root directory and tell automated systems how to interact with your content, but they differ in purpose, audience, and scope.

This guide breaks down the differences clearly, shows you when to use each, and explains how they can work together to protect your content while keeping your site search-friendly.

Understanding robots.txt

What It Does

- Purpose: Tells search engine crawlers (Googlebot, Bingbot, etc.) which pages or files they may or may not crawl.
- Impact: Directly affects crawling, which in turn shapes indexing and SEO visibility.
- Supported by: Almost all major search engines.

Example robots.txt

```
User-agent: *
Disallow: /private/
Allow: /public/
```
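To see how a crawler reads these rules, here is a minimal sketch using Python's standard-library `urllib.robotparser`. The domain `example.com` and the sample paths are placeholders. One caveat: this parser applies the first matching rule in file order, while Google (and RFC 9309) use the most specific, longest match. For simple rules like the ones above, both approaches give the same answers.

```python
from urllib.robotparser import RobotFileParser

# The example rules from above, one directive per line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /public/
"""

# Parse the rules from a string instead of downloading them with read().
parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Placeholder URLs on a hypothetical domain.
urls = [
    "https://example.com/private/report.html",
    "https://example.com/public/guide.html",
    "https://example.com/blog/post.html",
]

for url in urls:
    # "User-agent: *" applies to every crawler, so any agent name works here.
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(f"{verdict:8} {url}")
```

The /private/ URL comes back blocked and the /public/ URL allowed. The /blog/ URL is also allowed, because no rule matches it and anything not disallowed is crawlable by default.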

Understanding llms.txt

What It Does

- Purpose: Intended to tell AI language models how they may use your site's content: whether to reference it, ignore it, or limit access.
- Impact: Aims to influence AI model training and content generation; it does not affect search engine rankings.
- Supported by: An emerging convention; support among AI platforms is still limited and inconsistent.

Example llms.txt

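```
User-agent: *
Disallow: /exclusive-content/
Allow: /free-resources/
```

This example borrows robots.txt syntax, and there is no agreed format for rules like these yet. The most widely cited llms.txt proposal (published at llmstxt.org) takes a different approach: a Markdown file that summarizes a site and links to its most useful pages for LLMs, rather than a list of Allow and Disallow rules. Check which format a given AI tool actually reads.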
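No official parser exists for rules written this way. As a rough sketch, assuming your llms.txt uses exactly the robots.txt-style syntax shown above, you can sanity-check it with the same standard-library parser. This only tells you what the file asks for. It says nothing about whether any AI system will honor it. The agent name `ExampleAIBot` and the helper `llms_rules_allow` are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# The llms.txt example from above. Assumption: it uses robots.txt-style syntax.
LLMS_TXT = """\
User-agent: *
Disallow: /exclusive-content/
Allow: /free-resources/
"""

llms_rules = RobotFileParser()
llms_rules.parse(LLMS_TXT.splitlines())


def llms_rules_allow(url: str, agent: str = "ExampleAIBot") -> bool:
    """Report what the file *requests* for this URL; it cannot enforce anything.

    "ExampleAIBot" is a hypothetical agent name used only for illustration.
    """
    return llms_rules.can_fetch(agent, url)


print(llms_rules_allow("https://example.com/exclusive-content/report.pdf"))  # False
print(llms_rules_allow("https://example.com/free-resources/checklist.pdf"))  # True
```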

llms.txt vs. robots.txt: Side-by-Side Comparison

| Feature | robots.txt | llms.txt |
|---|---|---|
| Primary purpose | Control which URLs web crawlers may crawl, and therefore what can be indexed | Signal how AI models may use and reference your content |
| Audience | Search engine crawlers | AI models (LLMs) |
| Effect on SEO | Direct: blocked URLs can't be crawled | No direct SEO impact |
| File location | Root directory of your domain | Root directory of your domain |
| Standardization | De facto standard since 1994; formalized as RFC 9309 in 2022 | Emerging, not yet universally enforced |
| Typical directives | User-agent, Allow, Disallow, Crawl-delay (non-standard; Google ignores it) | Allow, Disallow, optional AI-specific directives (no agreed syntax yet) |

When to Use Each

Use robots.txt if:

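- You want to keep crawlers out of non-public or low-value sections. (To reliably keep a page out of search results, use a noindex meta tag or password protection instead: a disallowed URL can still be indexed if other pages link to it.)
- You want to optimize crawl efficiency for SEO.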

Use llms.txt if:

- You want to control how AI tools and chatbots interact with your site content.
- You want to signal that specific content should not be used for AI training.

Can You Use Both?

Absolutely, and you probably should. They work independently but complement each other:

- robots.txt for search engines
- llms.txt for AI models

Example of both together:

robots.txt:

```
User-agent: *
Disallow: /private/
```

llms.txt:

```
User-agent: *
Disallow: /confidential/
```
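Because the two files are read independently, the same URL can get different answers from each. The sketch below checks one URL against both example files, assuming both use the robots.txt-style syntax shown here and reusing Python's `urllib.robotparser`. The helper names `parse_rules` and `audience_report` and the sample URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def parse_rules(text: str) -> RobotFileParser:
    """Build a parser from robots.txt-style text."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


# The two example files from above.
robots = parse_rules("User-agent: *\nDisallow: /private/\n")
llms = parse_rules("User-agent: *\nDisallow: /confidential/\n")


def audience_report(url: str) -> dict:
    """Hypothetical helper: check one URL against each file separately."""
    return {
        "search crawlers": robots.can_fetch("*", url),
        "AI models": llms.can_fetch("*", url),
    }


for url in ("https://example.com/private/a.html",
            "https://example.com/confidential/b.html"):
    print(url, audience_report(url))
```

A page under /private/ is off-limits to search crawlers but not mentioned in llms.txt, and /confidential/ is the reverse. If a path should be excluded for both audiences, it needs a rule in each file.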

Implementation Best Practices

Keep Files Simple

Too many complex directives can cause unintended blocking.
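One common source of unintended blocking is that path rules match by prefix. A minimal sketch with Python's `urllib.robotparser` (the page path and the helper `is_blocked` are hypothetical) shows that dropping the trailing slash from `/private/` also blocks any URL that merely starts with `/private`.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(rules: str, path: str) -> bool:
    """Return True if the rules disallow this path for a generic crawler ("*")."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return not parser.can_fetch("*", "https://example.com" + path)


narrow = "User-agent: *\nDisallow: /private/\n"
broad = "User-agent: *\nDisallow: /private\n"  # no trailing slash

# A hypothetical public page whose path happens to start with "/private".
page = "/private-events.html"

print(is_blocked(narrow, page))  # False: only the /private/ directory is blocked
print(is_blocked(broad, page))   # True: the prefix "/private" matches too
```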

Regularly Review Directives

As your site evolves, so should these files.

Test Accessibility

Check that both files are publicly reachable by opening them in a browser or fetching them from a terminal:

```
curl https://yourdomain.com/robots.txt
curl https://yourdomain.com/llms.txt
```
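If you'd rather script the check, here is a rough Python equivalent of the curl commands using only the standard library. `yourdomain.com` is the same placeholder as above, and `root_file_url` and `check_file` are hypothetical helper names. Building the URL from the scheme and host, rather than from a page's path, also guards against looking for the files in a subfolder.

```python
from urllib.error import HTTPError, URLError
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def root_file_url(any_page_url: str, filename: str) -> str:
    """Build the root-level URL for a file such as robots.txt from any page URL."""
    parts = urlsplit(any_page_url)
    # Keep scheme and host (including any port); replace path, query, and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/" + filename, "", ""))


def check_file(url: str, timeout: float = 10.0) -> str:
    """Fetch a URL and summarize the result, similar to running curl on it."""
    try:
        with urlopen(url, timeout=timeout) as response:
            body = response.read(200).decode("utf-8", errors="replace")
            return f"HTTP {response.status}, starts with: {body[:60]!r}"
    except HTTPError as err:  # the server answered with an error status
        return f"HTTP {err.code} (file missing or blocked?)"
    except URLError as err:  # DNS failure, refused connection, timeout, etc.
        return f"request failed: {err.reason}"


page = "https://yourdomain.com/blog/some-post/"  # placeholder URL
for name in ("robots.txt", "llms.txt"):
    print(root_file_url(page, name))
```

Calling `check_file(root_file_url(page, "llms.txt"))` performs the actual network request; a missing file typically shows up as an HTTP 404.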

Common Mistakes to Avoid

- Blocking essential pages in robots.txt, which hurts SEO.
- Relying on llms.txt for privacy: AI compliance is voluntary for now.
- Forgetting to upload to the root: subfolder placement won't work.
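To catch the first mistake before it ships, you can keep a short list of pages that must stay crawlable and check them whenever robots.txt changes. A minimal sketch using Python's `urllib.robotparser`; `ESSENTIAL_PATHS`, `find_blocked`, and the draft rules (a leftover staging rule aimed at one crawler) are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical pages that should always be crawlable.
ESSENTIAL_PATHS = ["/", "/products/", "/pricing/", "/blog/"]


def find_blocked(robots_txt: str, paths: list[str], agent: str = "Googlebot",
                 base: str = "https://example.com") -> list[str]:
    """Return the paths that the given robots.txt rules would block for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(agent, base + p)]


# A draft with a forgotten staging rule: Googlebot gets its own group that
# blocks everything, while other crawlers only lose /private/.
draft = """\
User-agent: *
Disallow: /private/

User-agent: Googlebot
Disallow: /
"""

blocked = find_blocked(draft, ESSENTIAL_PATHS)
if blocked:
    print("Essential pages blocked for Googlebot:", blocked)
```

A crawler that matches its own group follows only that group, which is why Googlebot loses every page here while a crawler such as Bingbot, falling back to the `*` group, keeps them all.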

Key Takeaways

- robots.txt = SEO & web crawler control.
- llms.txt = AI content usage control.
- They're complementary, not interchangeable.
- Keep them clear, minimal, and up-to-date.

FAQ

1. Does llms.txt affect SEO rankings?

No. It's intended for AI models, not search engines.

2. Can AI ignore llms.txt?

Yes. Compliance is voluntary, and no widely adopted standard yet requires AI systems to follow it.

3. Is robots.txt legally binding?

No, but major search engines generally respect it.

4. Should I use the same directives in both?

Not necessarily—tailor them to their respective audiences.

Conclusion

If you want to shape how both search engines and AI systems interact with your content, use both files. robots.txt keeps your SEO house in order; llms.txt states your rules for AI, even though compliance is still voluntary. Maintain both well, and you'll protect your search visibility while making your intellectual property preferences clear.